AI at the Edge Changes Everything

The best meeting room technology doesn’t serve the room. It serves the people in it.

The question worth asking about every piece of technology in your conference room: who is it actually serving?

It sounds obvious. Technology should serve people. But in the real world, conference room hardware and collaboration tools have long demanded the opposite. You find the seat with good lighting and remember to mute when you’re not talking. Someone takes notes. You repeat yourself because the microphone didn’t quite catch it. You start the meeting late because something didn’t connect properly.

The room works. But you’re working around it.

This is the problem Edge AI solves, not as a technical achievement, but as a human one.

When a device has enough local computing power to understand the room in real time, it can stop making people adapt to it. Instead, it can start adapting to them. The camera follows you. The lighting adjusts to your face. The notes take themselves. The friction disappears.

That’s the promise of the DTEN D7X AI. Not a smarter room, but a room that’s genuinely, actively working for the people inside it. It’s artificial intelligence infrastructure at the edge, designed from the hardware level up to think, see, and hear in real time, without waiting on a round trip to the cloud.

Why Edge AI Makes the Difference

To serve people in real time, technology has to keep up with how people actually behave: how they move, talk over each other, sit in bad light, and rarely stay perfectly framed in a camera shot. Processing that kind of complexity requires serious, dedicated compute. And where that compute lives determines everything.

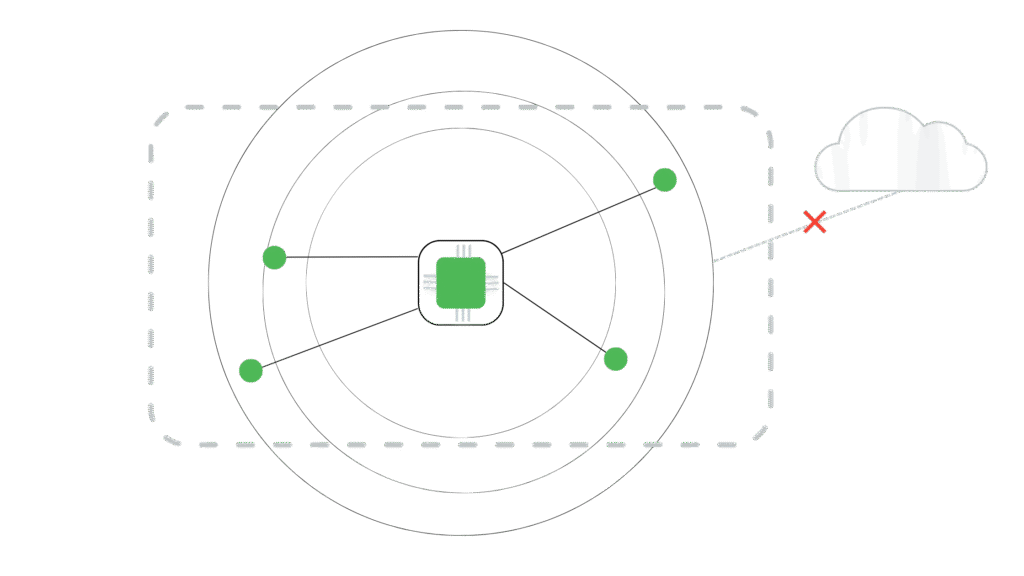

Cloud-dependent AI introduces latency. It makes the experience dependent on network conditions. It offloads the most demanding processing (voice, video, and spatial mapping) to remote infrastructure and then waits for a response. That limits how responsive and personalized the experience can be, because the intelligence is always one round trip away from the room.

Edge AI removes that ceiling.

When the compute is physically present in the device, the system can react at the speed of human behavior rather than the speed of a network request. Fast enough to track a presenter walking across the room. Precise enough to adjust lighting on an individual face without affecting the background. Sensitive enough to isolate a single voice from up to 30 feet away in a noisy environment. The result is technology that stops being something you manage and starts being something that quietly handles everything for you.

What That Looks Like in Practice

The foundation of everything DTEN does on the D7X AI is the AI Camera Module: a 48-megapixel main camera working in tandem with dual depth-sensing cameras that use Vision Transformer technology to continuously map the room’s geometry in three dimensions. This runs constantly in the background without ever interrupting your meeting feed. It’s the reason every other intelligent feature on the device is possible.

- The camera follows you, not the other way around. Because the system understands the room in three dimensions, it knows exactly where every participant is at all times. Speaker tracking means presenters can walk to the whiteboard, pace the room, or move naturally without ever thinking about the camera. The framing stays smooth and centered automatically. No one has to plant themselves in a chair to stay in frame.

- The lighting adjusts to you, not the room. Conference rooms are notoriously difficult environments for cameras. Bright windows can create harsh backlighting. Overhead lights favor some seats and not others. Participants with different skin tones are rarely served equally by a single global exposure setting. DTEN’s AI identifies each face individually in 3D space and applies brightness and exposure corrections directly, independent of the background. Everyone looks like they’re sitting in good light, regardless of where they actually are.

- The meeting stays focused even in imperfect spaces. In open offices or glass-walled rooms, cameras can be easily distracted by movement outside the meeting zone. Smart Boundary uses depth data to create an invisible perimeter around the active meeting area, so hallway traffic and passing colleagues never disrupt the frame. The technology adapts to the space, not the other way around.

- The audio works so participants don’t have to think about it. The D7X AI’s 15-element beamforming microphone array isolates speech from up to 30 feet away, while the AI continuously suppresses echo and background noise. Beamforming can estimate where a sound is coming from, but it can’t reliably determine who is speaking, so the system also uses voiceprint recognition to build a persistent profile of each individual in the room.
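Microphone arrays work out where a sound came from using the tiny differences in when each microphone hears it. As a simplified illustration (not DTEN’s 15-element beamformer, whose algorithm isn’t described here), the Python sketch below estimates a direction of arrival from just two microphones: it finds the sample lag that best aligns the two signals by brute-force cross-correlation, converts that lag to a time difference τ, and applies θ = asin(c·τ / d). The microphone spacing, sample rate, and test signal are assumptions chosen for the example.

```python
import math

# Simplified two-microphone sketch; NOT the D7X AI's actual beamforming pipeline.
SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at about 20 °C


def estimate_lag(ref, other, max_lag):
    """Brute-force cross-correlation: return the lag (in samples) that best
    aligns `other` with `ref`. A positive lag means `other` heard the sound later."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = sum(
            ref[i] * other[i + lag]
            for i in range(len(ref))
            if 0 <= i + lag < len(other)
        )
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def direction_of_arrival_deg(ref, other, mic_spacing_m, sample_rate_hz):
    """Source angle relative to broadside (0 degrees = straight ahead of the mic pair).
    The sign shows which microphone heard the sound first."""
    # The largest physically possible delay is spacing / speed of sound.
    max_lag = math.ceil(mic_spacing_m / SPEED_OF_SOUND_M_S * sample_rate_hz)
    tau = estimate_lag(ref, other, max_lag) / sample_rate_hz
    # Clamp to [-1, 1] so rounding to whole samples can't push asin out of range.
    ratio = max(-1.0, min(1.0, SPEED_OF_SOUND_M_S * tau / mic_spacing_m))
    return math.degrees(math.asin(ratio))


if __name__ == "__main__":
    # Hypothetical test signal: a click that reaches mic B 5 samples after mic A.
    fs = 48_000
    mic_a = [0.0] * 64
    mic_b = [0.0] * 64
    mic_a[20] = 1.0
    mic_b[25] = 1.0
    angle = direction_of_arrival_deg(mic_a, mic_b, mic_spacing_m=0.1, sample_rate_hz=fs)
    print(f"{angle:.1f} degrees")
```

Direction alone only says where a sound came from: two people sitting along the same bearing would produce the same estimate, which is why an identity cue such as a voiceprint has to be layered on top.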

Combining sight, space, and sound is what makes the D7X AI’s multi-sense approach unique: together, they establish an identity for everyone in the room. Visual appearance provides the first anchor. Spatial position, derived from depth mapping and the system’s understanding of the room’s physical layout, grounds that identity in place. Voiceprint recognition completes the picture. The result is a system that knows who is speaking, and that precision is what lets downstream AI workflows like transcription, meeting summaries, and real-time translation work as intended.
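How the D7X AI actually weighs these cues isn’t described here. As a rough illustration of why combining them helps, the Python sketch below shows a hypothetical late-fusion scheme: each known person gets a score in [0, 1] from each cue (appearance, position, voiceprint), the scores are combined with a weighted sum, and the best candidate is accepted only if it clears a confidence threshold. The `Candidate` type, the weights, and the 0.6 threshold are all assumptions made up for this example, and how the per-cue scores are produced is out of scope.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

# Hypothetical weights: how much each cue counts toward the fused score.
WEIGHTS = {"face": 0.3, "position": 0.3, "voice": 0.4}


@dataclass
class Candidate:
    """One known person in the room, with per-cue evidence in [0, 1]
    that this person is the one currently speaking (hypothetical inputs)."""
    name: str
    face: float      # visual appearance evidence
    position: float  # agreement between the sound's direction and the person's location
    voice: float     # voiceprint similarity


def fused_score(c: Candidate) -> float:
    """Weighted sum of the three cues."""
    return (WEIGHTS["face"] * c.face
            + WEIGHTS["position"] * c.position
            + WEIGHTS["voice"] * c.voice)


def identify_speaker(candidates: Sequence[Candidate],
                     threshold: float = 0.6) -> Optional[str]:
    """Return the best-matching person's name, or None if no one is confident enough."""
    if not candidates:
        return None
    best = max(candidates, key=fused_score)
    return best.name if fused_score(best) >= threshold else None


if __name__ == "__main__":
    room = [
        Candidate("Alice", face=0.9, position=0.2, voice=0.3),
        Candidate("Bob", face=0.5, position=0.9, voice=0.8),
    ]
    print(identify_speaker(room))
```

In this made-up scene, Alice has the strongest visual score, but Bob wins because his position and voiceprint agree: a single misleading cue isn’t enough on its own, which is the point of anchoring identity in several senses at once.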

The Hardware Behind It

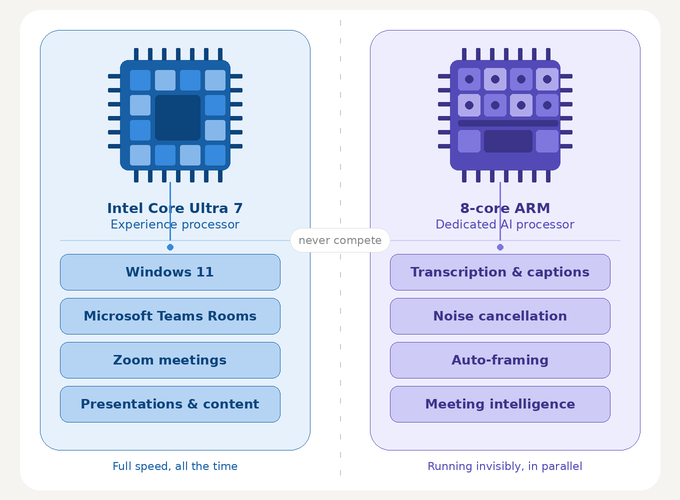

None of this is possible without the right foundation. The D7X AI runs on a dual-processor architecture: an Intel Core Ultra 7 CPU handling the full Windows 11 and Microsoft Teams Rooms or Zoom environment, paired with a dedicated 8-core ARM processor that handles AI workloads exclusively. The two never compete for resources. Meeting software and AI both run at full speed, simultaneously, all the time, with no external PC or hub required.

The separation matters because it keeps the intelligence from becoming a burden on the experience. The AI isn’t slowing down your call or stealing resources from your presentation. It’s running in parallel, doing its work invisibly, so the people in the room never have to think about it.

A Room That Works for You

The D7X AI 55 is intelligent whether a formal meeting is running or not. For a whiteboard session, an impromptu huddle, or a solo brainstorm, the room is always on, always aware, and always ready. Not because that’s an impressive technical capability, but because the people using it shouldn’t have to think about whether the technology is ready. It just is.

That’s the real measure of edge AI done right. Not where the compute lives. Not how many megapixels the camera has. But whether the people walking into that room ever have to work around the technology or whether the technology, finally, works around them.